SPECsfs2008_nfs.v3 Result

|

NetApp, Inc.

|

:

|

FAS6080 (FCAL Disks)

|

|

SPECsfs2008_nfs.v3

|

=

|

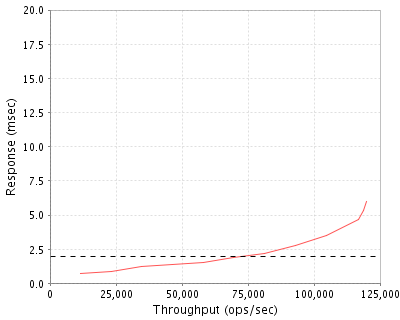

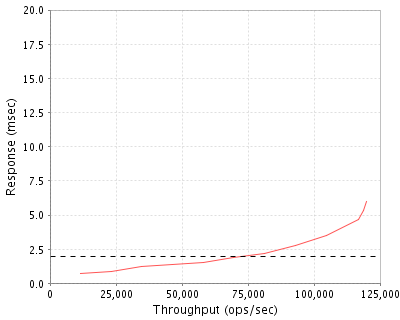

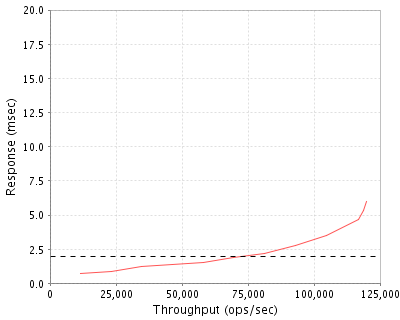

120011 Ops/Sec (Overall Response Time = 1.95 msec)

|

Performance

| Throughput (ops/sec) | Response (msec) |
|---|---|
| 11553 | 0.7 |
| 23145 | 0.9 |
| 34770 | 1.2 |
| 46401 | 1.4 |
| 57994 | 1.5 |
| 69632 | 1.9 |
| 81307 | 2.2 |
| 92969 | 2.8 |
| 104646 | 3.5 |
| 116700 | 4.7 |
| 118579 | 5.3 |
| 120011 | 6.0 |
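The overall response time quoted in the headline can be approximated from the throughput/response pairs above. The following is a minimal sketch, assuming the figure is the area under the response-time curve divided by the peak throughput, with the curve anchored at the origin and integrated by the trapezoidal rule. The function name and the origin anchor are assumptions for illustration, and the table values are rounded to 0.1 msec, so the result is only approximate.

```python
# Throughput (ops/sec) and response time (msec) pairs from the performance table.
POINTS = [
    (11553, 0.7), (23145, 0.9), (34770, 1.2), (46401, 1.4),
    (57994, 1.5), (69632, 1.9), (81307, 2.2), (92969, 2.8),
    (104646, 3.5), (116700, 4.7), (118579, 5.3), (120011, 6.0),
]


def overall_response_time(points):
    """Area under the response curve divided by peak throughput.

    Assumption: the curve starts at (0 ops/sec, 0 msec) and is
    integrated with the trapezoidal rule.
    """
    area = 0.0
    prev_x, prev_y = 0.0, 0.0
    for x, y in points:
        area += (x - prev_x) * (y + prev_y) / 2.0
        prev_x, prev_y = x, y
    return area / prev_x  # prev_x now holds the peak throughput


if __name__ == "__main__":
    print(f"{overall_response_time(POINTS):.2f} msec")
```

With the rounded values in the table, this evaluates to roughly 1.95 msec, in line with the reported figure.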

Product and Test Information

| | |
|---|---|
| Tested By | NetApp, Inc. |
| Product Name | FAS6080 (FCAL Disks) |
| Hardware Available | December 2007 |
| Software Available | September 2009 |
| Date Tested | August 2009 |
| SFS License Number | 33 |
| Licensee Locations | Sunnyvale, CA USA |

The NetApp(R) FAS6000 represents the top of the line of the FAS family of storage systems with NetApp's unified storage architecture. It features two models: the FAS6040 and FAS6080. FAS6000 performance is driven by a 64-bit architecture that uses high throughput, low latency links and PCI Express for all internal and external data transfers. With our FAS6000 series and Data ONTAP 8.0 you can efficiently consolidate SAN, NAS, primary, and secondary storage on a single platform. You get the ultimate in scalability, versatility, and availability when you use our flagship FAS6000 series. Data ONTAP 8.0 is designed to provide customers with the next generation of features and functionality to ensure they are able to meet the demands of their increasingly virtualized workloads. The FAS6080 system used in this test was configured using the new larger aggregate capability.

We've designed our systems to make them easy for you to install, configure, manage, and upgrade so you can quickly adapt your storage infrastructure to meet your changing business needs. To help you maximize staff productivity, all of our FAS systems share a unified storage architecture based on the Data ONTAP(R) operating system and use our integrated suite of application-aware manageability software. You can minimize the use of data center resources, including power, cooling, and floor space, by taking advantage of our comprehensive set of storage-saving software features in Data ONTAP like Deduplication and Thin Provisioning (FlexVols). Additionally, our FlexShare software is a powerful quality-of-service tool for Data ONTAP(R) storage systems. It lets you assign individual priorities to multiple application workloads consolidated on a single system, so you can tune how your system should prioritize its resources during conditions of high system utilization.
When you add massive scalability to other characteristics of the FAS family, the result is our FAS6000 series, the ideal platform for the largest applications and storage consolidations.

Configuration Bill of Materials

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 2 | Storage Controller | NetApp | FAS6080A-IB-BS2-R5 | FAS6080A ACT-ACT System |
| 2 | 24 | Disk Drives w/Shelf | NetApp | X94015A-ESH4-R5-C | DS14MK4 SHLF,ACPS,14x300GB,15K,HDD,ESH4,-C,R5 |
| 3 | 2 | 10 Gigabit Ethernet Adapter | NetApp | X1008A-R6 | NIC,2-Port,10GbE,Fiber,PCIe,R6 |
| 4 | 2 | Software License | NetApp | SW-T7C-NFS | NFS Software,T7C |

Server Software

| | |
|---|---|
| OS Name and Version | Data ONTAP 8.0 |
| Other Software | None |
| Filesystem Software | Data ONTAP 8.0 |

Server Tuning

| Name | Value | Description |
|---|---|---|
| vol options 'volume' no_atime_update | on | Disable atime updates (applied to all volumes) |

Server Tuning Notes

N/A

Disks and Filesystems

| Description | Number of Disks | Usable Size |
|---|---|---|
| 300GB FCAL 15K RPM Disk Drives | 324 | 65.4 TB |
| Total | 324 | 65.4 TB |

| | |
|---|---|
| Number of Filesystems | 2 |
| Total Exported Capacity | 64.64 TB |
| Filesystem Type | WAFL |
| Filesystem Creation Options | 64-bit aggregate option was selected during creation of the aggregates that housed the SFS filesystems on each controller. |
| Filesystem Config | Each filesystem was striped across 162 disks |
| Fileset Size | 14002.2 GB |

The storage configuration consisted of 24 shelves, each with 14 disks. Groups of four shelves were daisy-chained such that the outputs of each shelf were attached to the inputs of the next shelf in the group. The first shelf in each group had two 4Gbit/s FC-AL loop connections, each one connected to one of six FC-AL ports (integrated on the mainboard) on a different storage controller. Each storage controller was the primary owner of 12 shelves, with 162 disks in those shelves placed into a single 64-bit aggregate. Each aggregate was composed of 9 RAID-DP groups, and each RAID-DP group was composed of 16 data disks and 2 parity disks. Within each aggregate, a flexible volume (utilizing Data ONTAP FlexVol (TM) technology) was created to hold the SFS filesystem for that controller. Each volume was striped across all disks in the aggregate where it resided. Each controller was the owner of a single volume, but the disks in each aggregate were dual-attached so that, in the event of a fault, they could be managed by the other controller via an alternate loop. A separate flexible volume residing in a three-disk root aggregate on each controller was created to hold the Data ONTAP operating system and system files. The remaining three disks owned by each controller were reserved for spares.
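The disk accounting in the paragraph above can be cross-checked with a few lines of arithmetic. This is only a sketch: the constant and function names are illustrative, while the numbers are taken directly from the description.

```python
# Figures from the storage configuration description; names are illustrative.
SHELVES = 24
DISKS_PER_SHELF = 14
SHELVES_PER_LOOP_GROUP = 4
CONTROLLERS = 2
RAID_GROUPS_PER_AGGREGATE = 9
DATA_DISKS_PER_GROUP = 16
PARITY_DISKS_PER_GROUP = 2
ROOT_AGGREGATE_DISKS = 3
SPARE_DISKS = 3


def disks_per_controller():
    """Break down the disks owned by one storage controller."""
    data_aggregate = RAID_GROUPS_PER_AGGREGATE * (
        DATA_DISKS_PER_GROUP + PARITY_DISKS_PER_GROUP
    )
    total = data_aggregate + ROOT_AGGREGATE_DISKS + SPARE_DISKS
    return {
        "data_aggregate": data_aggregate,
        "root_aggregate": ROOT_AGGREGATE_DISKS,
        "spares": SPARE_DISKS,
        "total": total,
    }


layout = disks_per_controller()
total_disks = SHELVES * DISKS_PER_SHELF
loop_groups = SHELVES // SHELVES_PER_LOOP_GROUP

# 9 groups of 18 disks give the 162-disk aggregate on each controller.
assert layout["data_aggregate"] == 162
# 162 + 3 root + 3 spare = 168 disks per controller, 336 across both.
assert layout["total"] * CONTROLLERS == total_disks
# Six daisy-chained groups match the six FC-AL ports on each controller.
assert loop_groups == 6

print(layout, total_disks, loop_groups)
```

The 324 disks listed in the Disks and Filesystems table correspond to the two 162-disk data aggregates; the root aggregate and spare disks account for the remaining 12 of the 336 installed drives.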

Network Configuration

| Item No | Network Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | Jumbo Frame 10 Gigabit Ethernet | 2 | Dual-port 10 gigabit ethernet PCIe adapter |

Network Configuration Notes

There was a single, dual-port 10 gigabit ethernet network adapter configured on each storage controller. Only one interface on each controller was enabled during the test. The active interfaces were configured to use jumbo frames (MTU size of 9000 bytes). All network interfaces were connected to a Cisco 6509 switch, which provided connectivity to the clients.

Benchmark Network

An MTU size of 9000 was set for all connections to the switch. Each load generator was connected to the network via a single 1 GigE port, which was configured with 2 separate IP addresses on separate subnets.
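As a rough tally of nominal link capacity on each side of the switch, the sketch below multiplies port counts by line rates. It assumes 25 load generators (the count given in the benchmark parameters) and uses nominal line rates only; it says nothing about achieved throughput, and the variable names are illustrative.

```python
# Nominal line rates in Gbit/s; port counts come from the report.
LOAD_GENERATORS = 25          # from the benchmark parameters
CLIENT_PORT_GBITS = 1         # one 1 GigE port per load generator
IPS_PER_CLIENT_PORT = 2       # two IP addresses on separate subnets
ACTIVE_STORAGE_PORTS = 2      # one active 10GbE port per controller
STORAGE_PORT_GBITS = 10

client_capacity = LOAD_GENERATORS * CLIENT_PORT_GBITS
storage_capacity = ACTIVE_STORAGE_PORTS * STORAGE_PORT_GBITS
client_addresses = LOAD_GENERATORS * IPS_PER_CLIENT_PORT

print(f"client side:  {client_capacity} Gbit/s nominal")
print(f"storage side: {storage_capacity} Gbit/s nominal")
print(f"client IP addresses: {client_addresses}")
```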

Processing Elements

| Item No | Qty | Type | Description | Processing Function |
|---|---|---|---|---|
| 1 | 8 | CPU | 2.6GHz AMD Opteron(tm) Processor 885, 1MB L2 cache | Networking, NFS protocol, WAFL filesystem, RAID/Storage drivers |

Processing Element Notes

Each storage controller has four physical processors, each with two cores.

Memory

| Description | Size in GB | Number of Instances | Total GB | Nonvolatile |
|---|---|---|---|---|
| Storage controller mainboard memory | 32 | 2 | 64 | V |
| Non-volatile memory on NVRAM PCIe adapter | 2 | 2 | 4 | NV |
| Grand Total Memory Gigabytes | | | 68 | |

Memory Notes

Each storage controller has main memory that is used for the operating system and for caching filesystem data. A separate, battery-backed RAM module on a separate PCIe adapter is used to provide stable storage for writes that have not yet been written to disk.

Stable Storage

The WAFL filesystem logs writes and other filesystem-modifying transactions to the NVRAM adapter. In an active-active configuration, as in the system under test, such transactions are also logged to the NVRAM on the partner storage controller so that, in the event of a storage controller failure, any transactions on the failed controller can be completed by the partner controller. Filesystem-modifying NFS operations are not acknowledged until after the storage system has confirmed that the related data are stored in the NVRAM adapters of both storage controllers (when both controllers are active). The battery backing the NVRAM ensures that any uncommitted transactions are preserved for at least 72 hours.

System Under Test Configuration Notes

The system under test consisted of two FAS6080 storage controllers and 24 storage shelves, each with 14 300GB FC-AL disk drives. The two controllers were executing Data ONTAP 8.0 software operating in 7G mode. They were configured in an active-active cluster failover configuration, using the high-availability cluster software option in conjunction with an InfiniBand cluster interconnect provided on the NVRAM adapter. One dual-port 10 gigabit ethernet host bus adapter was present in a PCI-e expansion slot on each storage controller. The storage shelves were configured in groups of four shelves, which were connected to each other via two 4Gbit/s FCAL connections. The first shelf in each group had two 4Gbit/s FC-AL input connections, one to each storage controller. The system under test was connected to a 10 gigabit ethernet switch via 2 network ports (one active port per dual-port network adapter on each storage controller, with the second port unused).

Other System Notes

All standard data protection features, including background RAID and media error scrubbing, software-validated RAID checksumming, and double disk failure protection via double parity RAID (RAID-DP), were enabled during the test.

Test Environment Bill of Materials

| Item No | Qty | Vendor | Model/Name | Description |
|---|---|---|---|---|
| 1 | 25 | IBM | IBM eServer LS21 Blade | Bladecenter 14 blade chassis with 1GB RAM and Linux operating system |
| 2 | 1 | Cisco | 6509 | Cisco Catalyst 6509 Ethernet Switch |

Load Generators

| | |
|---|---|
| LG Type Name | LG1 |
| BOM Item # | 1 |
| Processor Name | Dual-Core AMD Opteron Processor 2216 HE |
| Processor Speed | 2.4 GHz |
| Number of Processors (chips) | 1 |
| Number of Cores/Chip | 2 |
| Memory Size | 1 GB |
| Operating System | RHEL4u5 kernel 2.6.9-55.0.2.ELsmp |
| Network Type | 1 x Broadcom NetXtreme II Gigabit Ethernet BCM5706S(Rev 02) |

Load Generator (LG) Configuration

Benchmark Parameters

| | |
|---|---|
| Network Attached Storage Type | NFS V3 |
| Number of Load Generators | 25 |
| Number of Processes per LG | 26 |
| Biod Max Read Setting | 8 |
| Biod Max Write Setting | 8 |
| Block Size | AUTO |

Testbed Configuration

| LG No | LG Type | Network | Target Filesystems | Notes |
|---|---|---|---|---|
| 1..25 | LG1 | 1 | /vol/vol1 /vol/vol2 | N/A |

Load Generator Configuration Notes

All filesystems were mounted on all clients, which were connected to the same physical and logical network.

Uniform Access Rule Compliance

Each load-generating client hosted 26 processes. The assignment of processes to filesystems and network interfaces was done such that they were evenly divided across all filesystems and network paths to the storage controllers. The filesystem data was striped evenly across all disks and FC-AL loops on the storage backend.
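One way to picture the even division described above is a round-robin assignment of each client's processes to the two target filesystems. The sketch below is hypothetical: the report does not state how the benchmark actually mapped processes, and the function name and the modulo scheme are assumptions chosen only to show that 26 processes per client split evenly across two filesystems.

```python
from collections import Counter
from itertools import product

LOAD_GENERATORS = 25
PROCESSES_PER_LG = 26
FILESYSTEMS = ["/vol/vol1", "/vol/vol2"]


def assign_processes():
    """Hypothetical round-robin: process p on every client targets
    filesystem p mod 2. Not taken from the benchmark's own logic."""
    assignment = {}
    for lg, proc in product(range(1, LOAD_GENERATORS + 1), range(PROCESSES_PER_LG)):
        assignment[(lg, proc)] = FILESYSTEMS[proc % len(FILESYSTEMS)]
    return assignment


assignment = assign_processes()
per_filesystem = Counter(assignment.values())
per_client = Counter((lg, fs) for (lg, _), fs in assignment.items())

# 650 processes in total, 325 per filesystem, 13 per filesystem on each client.
assert len(assignment) == 650
assert set(per_filesystem.values()) == {325}
assert set(per_client.values()) == {13}

print(per_filesystem)
```

Because each controller owned a single volume and exposed one active interface, an even split across filesystems in this sketch would also be an even split across the two network paths.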

Other Notes

NetApp is a registered trademark and "Data ONTAP", "FlexVol", and "WAFL" are trademarks of NetApp, Inc. in the United States and other countries. All other trademarks belong to their respective owners and should be treated as such.

Config Diagrams

Generated on Tue Aug 25 15:46:57 2009 by SPECsfs2008 HTML Formatter

Copyright © 1997-2008 Standard Performance Evaluation Corporation

First published at SPEC.org on 25-Aug-2009