SPECsfs2008_nfs.v3 Result

|

Huawei

|

:

|

OceanStor N8500 Clustered NAS Storage System, 24 nodes

|

|

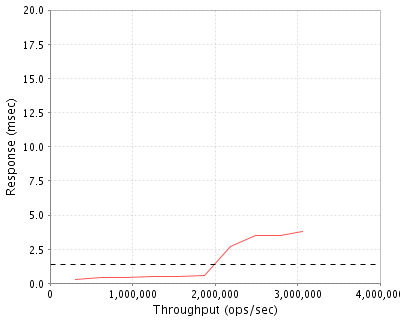

SPECsfs2008_nfs.v3

|

=

|

3064602 Ops/Sec (Overall Response Time = 1.39 msec)

|

Performance

| Throughput (ops/sec) | Response (msec) |
|---|---|
| 310130 | 0.3 |
| 621075 | 0.4 |
| 931969 | 0.4 |
| 1243548 | 0.5 |
| 1554753 | 0.5 |
| 1868974 | 0.6 |
| 2183089 | 2.7 |
| 2495548 | 3.5 |
| 2790350 | 3.5 |
| 3064602 | 3.8 |
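SPECsfs2008 reports the overall response time as the area under the response-time versus throughput curve divided by the peak throughput. The sketch below recomputes it from the Performance table, assuming the curve is anchored at the origin (0 ops/sec, 0 msec) and that measured points are joined by straight lines (trapezoidal integration). Under those assumptions it reproduces the reported 1.39 msec. The names `POINTS` and `overall_response_time` are illustrative only and are not part of any SPEC tool.

```python
from typing import List, Tuple

# (throughput in ops/sec, response time in msec) from the Performance table
POINTS: List[Tuple[float, float]] = [
    (310130, 0.3), (621075, 0.4), (931969, 0.4), (1243548, 0.5),
    (1554753, 0.5), (1868974, 0.6), (2183089, 2.7), (2495548, 3.5),
    (2790350, 3.5), (3064602, 3.8),
]

def overall_response_time(points: List[Tuple[float, float]]) -> float:
    """Area under the response-time curve divided by peak throughput.

    Assumption: the curve starts at the origin and consecutive points are
    joined by straight lines, so each segment contributes a trapezoid.
    """
    area = 0.0
    prev_x, prev_y = 0.0, 0.0
    for x, y in points:
        area += (x - prev_x) * (prev_y + y) / 2
        prev_x, prev_y = x, y
    peak = max(x for x, _ in points)
    return area / peak

print(f"{overall_response_time(POINTS):.2f} msec")  # 1.39 msec
```

The steep rise between 1868974 and 2183089 ops/sec (0.6 to 2.7 msec) contributes most of the area, which is why the overall figure sits well above the low-load response times.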

Product and Test Information

| Field | Value |
|---|---|
| Tested By | Huawei |
| Product Name | OceanStor N8500 Clustered NAS Storage System, 24 nodes |
| Hardware Available | July 2012 |
| Software Available | July 2012 |
| Date Tested | September 2012 |
| SFS License Number | 9021 |
| Licensee Locations | West Zone Science Park of UESTC, No. 88 Tianchen Road, Chengdu, CHINA |

The N8500 Clustered NAS Storage System is an advanced, modular, unified storage platform (supporting NAS, FC SAN, and IP SAN) designed for high-end and mid-range storage applications. Huawei OceanStor N8500 is an integrated storage solution designed to make efficient use of storage resources. Its performance, reliability, ease of management, and flexible scalability suit it to a wide range of applications, including high-end computing, databases, websites, file servers, streaming media, digital video surveillance, and file backup.

Configuration Bill of Materials

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 12 | Engine Nodes | Huawei | N8500-EHS-N2M384G-DC | OceanStor N8500 Clustered NAS Storage System (two internal nodes per unit; 4 CPUs and 384 GB memory in total) |
| 2 | 1 | Fibre Channel switch base module | Huawei | SN1-BASE | Huawei SNS5120 FC Switch, Base Module (AC, dual CPU, dual power supply, dual fan) |
| 3 | 6 | Fibre Channel switch I/O blade | Huawei | SN1Z01FCIO | Huawei SNS5120 FC Switch, I/O Blade (8/4/2 Gb FC, 16 ports, with 16 × 8 Gb SFPs) |
| 4 | 6 | N8500 storage unit | Huawei | STTZ14SPES | OceanStor Dorado5100 High Performance Solid State Storage System Controller Enclosure, with HSSD Controller System Software |
| 5 | 12 | N8500 storage unit | Huawei | STTZ08DAE24 | High Performance Solid State Storage System Disk Enclosure, 2U, AC, 400 GB per SSD |
| 6 | 6 | N8500 storage unit | Huawei | SPE61C0200-S62-2C96G-DC-BASE | OceanStor S6800T Controller Enclosure (dual controller, DC, 96 GB cache, with Huawei Storage Array Control System Software) |
| 7 | 24 | N8500 storage unit | Huawei | DAE12435U4-AC | DAE12435U4-03 Disk Enclosure (4U, 3.5", AC, SAS expansion module) |
| 8 | 144 | Disk drive | Huawei | eMLC400G-S-3 | HSSD eMLC 400 GB SAS solid-state drive (3.5") |
| 9 | 576 | Disk drive | Huawei | SATA2K-7.2K | 2000 GB 7.2K RPM SAS-SATA disk drive (3.5") |
| 10 | 1 | 10GE network switch frame | Huawei | CE12804-C | Huawei CloudEngine 12804 universal integrated cabinet |
| 11 | 2 | 10GE network switch blade | Huawei | CE-L48XS-EA | Huawei CloudEngine 12804 48-port 10GBASE-X interface card (EA, SFP+) |

Server Software

| Field | Value |
|---|---|
| OS Name and Version | N8500 Clustered NAS Engine Software V200R001 |
| Other Software | Integrated Storage Management V100R005C00 |
| Filesystem Software | VxFS |

Server Tuning

| Name | Value | Description |
|---|---|---|
| nfsd number | 380 | The number of NFS server threads was increased to 380 by writing to /proc/fs/nfsd/threads. |
| read_pref_io | 1048576 | Preferred read request size, in bytes. |
| noatime | on | Mount option added to all filesystems to disable updating of access times. |
| vx_ninode | 9000000 | Maximum number of inodes to cache. |
| write_throttle | 1 | The number of dirty pages per file. |
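On Linux, the kernel NFS server's thread count can be changed by writing a number to /proc/fs/nfsd/threads, which is the mechanism the nfsd tuning row refers to; reading the file returns the current count. A minimal sketch, assuming a Linux NFS server with the nfsd filesystem mounted at /proc/fs/nfsd and root privileges. The helper `set_nfsd_threads` is hypothetical, and the demo writes to a scratch file rather than the real control file.

```python
import tempfile
from pathlib import Path

# Linux knfsd control file; reading it returns the current thread count.
NFSD_THREADS = Path("/proc/fs/nfsd/threads")

def set_nfsd_threads(count: int, path: Path = NFSD_THREADS) -> int:
    """Hypothetical helper: write the thread count, then read it back."""
    if count < 0:
        raise ValueError("thread count must be non-negative")
    path.write_text(f"{count}\n")
    return int(path.read_text().strip())

if __name__ == "__main__":
    # Use a scratch file; the real /proc entry needs root and a running nfsd.
    with tempfile.TemporaryDirectory() as scratch:
        print(set_nfsd_threads(380, Path(scratch) / "threads"))  # 380
```

Reading the value back after writing it gives a cheap confirmation that the kernel accepted the new setting.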

Server Tuning Notes

None

Disks and Filesystems

| Description | Number of Disks | Usable Size |
|---|---|---|
| Dorado5100 storage unit with 144 × 400 GB SSDs, bound into 5+1 RAID5 LUNs | 144 | 43.2 TB |
| S6800T storage unit with 576 × 2 TB SATA HDDs, bound into 6+6 RAID10 LUNs | 576 | 523.0 TB |
| Total | 720 | 566.2 TB |
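The disk counts and RAID geometries in this table, together with the filesystem layout described below (one LUN per RAID group, three LUNs per filesystem), can be cross-checked with a few lines of arithmetic. This sketch counts RAID groups and raw data capacity (parity and mirror copies excluded) using nominal decimal drive sizes. It does not model the formatting overhead or unit convention behind the smaller usable sizes reported in the table.

```python
# Nominal figures from the Disks and Filesystems table and the layout notes.
SSD_COUNT, SSD_GB = 144, 400     # Dorado5100 SSDs, 5+1 RAID5
HDD_COUNT, HDD_GB = 576, 2000    # S6800T 2 TB HDDs, 6+6 RAID10
FILESYSTEMS = 24

raid5_groups = SSD_COUNT // (5 + 1)    # 6 disks per RAID5 group
raid10_groups = HDD_COUNT // (6 + 6)   # 12 disks per RAID10 group

# Raw data capacity: 5 data disks per RAID5 group, 6 mirrored pairs per RAID10 group.
raid5_data_gb = raid5_groups * 5 * SSD_GB
raid10_data_gb = raid10_groups * 6 * HDD_GB

# One LUN per RAID group; each filesystem uses 1 RAID5 LUN and 2 RAID10 LUNs.
luns_available = raid5_groups + raid10_groups
luns_needed = FILESYSTEMS * (1 + 2)

print(raid5_groups, raid10_groups, luns_available, luns_needed)  # 24 48 72 72
print(raid5_data_gb, raid10_data_gb)                              # 48000 576000
```

The counts line up exactly: 24 RAID5 groups give each filesystem one SSD LUN, and 48 RAID10 groups give each filesystem two HDD LUNs.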

| Field | Value |
|---|---|
| Number of Filesystems | 24 |
| Total Exported Capacity | 533299 GB |
| Filesystem Type | VxFS |
| Filesystem Creation Options | Default |
| Filesystem Config | Each client can access all the filesystems. |
| Fileset Size | 363318.4 GB |

Each filesystem consists of three LUNs: one from a 5+1 RAID5 group on the Dorado5100 and two from 6+6 RAID10 groups on the S6800T. Each RAID group contains exactly one LUN. The N8500 NAS system can dynamically and quickly recognize hot files and migrate them between the Dorado5100 and the S6800T: filesystem metadata and hot data are stored on the Dorado5100, while colder files are stored on the S6800T.
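The report does not describe how the N8500 detects hot files or when it migrates them. As a purely hypothetical illustration of access-frequency tiering, the sketch below compares each file's access count in a sampling interval against a threshold and plans moves so that hot files sit on the Dorado5100 SSD tier and cold files on the S6800T HDD tier. `HOT_THRESHOLD`, `plan_migrations`, and the per-interval counting are assumptions, and metadata placement is not modeled.

```python
from typing import Dict, List, Tuple

SSD_TIER, HDD_TIER = "Dorado5100", "S6800T"
HOT_THRESHOLD = 100  # hypothetical: accesses per sampling interval that mark a file hot

def plan_migrations(tier: Dict[str, str], accesses: Dict[str, int],
                    threshold: int = HOT_THRESHOLD) -> List[Tuple[str, str, str]]:
    """Return (file, from_tier, to_tier) moves: hot files to SSD, cold files to HDD."""
    moves = []
    for name, current in sorted(tier.items()):
        target = SSD_TIER if accesses.get(name, 0) >= threshold else HDD_TIER
        if target != current:
            moves.append((name, current, target))
    return moves

# Example: "a" has become hot, "b" has cooled down, "c" stays where it is.
placement = {"a": HDD_TIER, "b": SSD_TIER, "c": HDD_TIER}
counts = {"a": 500, "b": 3, "c": 10}
print(plan_migrations(placement, counts))
# [('a', 'S6800T', 'Dorado5100'), ('b', 'Dorado5100', 'S6800T')]
```

A real tiering engine would also rate-limit migrations and use hysteresis so that files near the threshold do not bounce between tiers; this sketch omits both.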

Network Configuration

| Item No | Network Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | 10 Gigabit Ethernet | 48 | Each N8500 engine node has two 10GE network ports; both were used. |

Network Configuration Notes

All network interfaces were connected to the 10GE network switch (Huawei CE12804).

Benchmark Network

An MTU size of 9000 was set for all connections to the switch.

Processing Elements

| Item No | Qty | Type | Description | Processing Function |
|---|---|---|---|---|
| 1 | 48 | CPU | CPU for N8500 engine nodes: Intel six-core Xeon X5690, 3.46 GHz, 12 MB L3 cache | VxFS, NFS, TCP/IP |
| 2 | 48 | CPU | CPU for storage controllers: Intel quad-core Xeon E5540, 2.53 GHz, 1 MB L2 cache | Block serving |

Processing Element Notes

Each N8500 NAS Engine Node has two physical processors.

Memory

| Description | Size in GB | Number of Instances | Total GB | Nonvolatile |
|---|---|---|---|---|
| Clustered NAS engine node memory | 192 | 24 | 4608 | V |
| The storage controllers provide a write cache vault. When a power failure is detected, a standby BBU (battery backup unit) takes over to preserve the controller cache contents, and the controller flushes them to the vault. | 96 | 12 | 1152 | NV |
| Grand Total Memory Gigabytes | | | 5760 | |

Memory Notes

Each storage array has dual disk array controller units that work as an active-active failover pair.
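The memory totals can be reconciled with the Configuration Bill of Materials: 12 engine units with 384 GB each give 24 nodes with 192 GB each. The sketch assumes, since the report does not say so explicitly, that the 12 nonvolatile 96 GB instances correspond to the 6 Dorado5100 and 6 S6800T controller enclosures.

```python
# From the Bill of Materials: 12 engine units, 2 nodes and 384 GB per unit.
ENGINE_UNITS, NODES_PER_UNIT, GB_PER_UNIT = 12, 2, 384
# Assumption: one 96 GB nonvolatile cache per array (6 Dorado5100 + 6 S6800T).
ARRAYS, NV_GB_PER_ARRAY = 6 + 6, 96

nodes = ENGINE_UNITS * NODES_PER_UNIT           # 24 engine nodes
gb_per_node = GB_PER_UNIT // NODES_PER_UNIT     # 192 GB per node
volatile_gb = nodes * gb_per_node               # 4608 GB (V)
nonvolatile_gb = ARRAYS * NV_GB_PER_ARRAY       # 1152 GB (NV)

print(volatile_gb + nonvolatile_gb)             # 5760
```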

Stable Storage

The storage controllers provide a write cache vault. When a power failure is detected, a standby BBU (battery backup unit) takes over to preserve the controller cache contents, and the controller flushes them to the vault.
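The vaulting sequence can be pictured as a small state machine: acknowledged writes sit in controller cache, and a power failure switches the cache to battery while its dirty contents are copied to the vault. This is a toy model for illustration only; `ControllerCache` and its methods are hypothetical and do not reflect the actual controller firmware. The restore step is also an assumption, since the report only describes the power-loss path.

```python
from dataclasses import dataclass, field
from typing import Dict

@dataclass
class ControllerCache:
    """Toy model of a controller write cache protected by a vault (hypothetical)."""
    dirty: Dict[int, bytes] = field(default_factory=dict)  # block -> data not yet on disk
    vault: Dict[int, bytes] = field(default_factory=dict)  # persistent copy made on power loss
    on_battery: bool = False

    def write(self, block: int, data: bytes) -> None:
        # Acknowledged writes live only in volatile cache until destaged.
        self.dirty[block] = data

    def power_failure(self) -> None:
        # The standby BBU keeps the cache powered long enough to copy it out.
        self.on_battery = True
        self.vault.update(self.dirty)
        self.dirty.clear()  # DRAM contents are lost once battery power runs out

    def power_restored(self) -> None:
        # Assumed recovery step: reload vaulted data so it can be destaged normally.
        self.dirty.update(self.vault)
        self.vault.clear()
        self.on_battery = False
```

The key property is that no acknowledged write exists only in volatile memory once battery power is gone.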

System Under Test Configuration Notes

Other System Notes

Test Environment Bill of Materials

| Item No | Qty | Vendor | Model/Name | Description |
|---|---|---|---|---|
| 1 | 24 | Huawei | RH2288 | 48 GB RAM and Red Hat Enterprise Linux Server release 5.5 |

Load Generators

| Field | Value |
|---|---|
| LG Type Name | RH2288 |
| BOM Item # | 1 |
| Processor Name | X86 series-FCLGA2011-2200MHz-0.9V-64bit-95000mW-SandyBridge-EP Xeon E5-2660-8Core |
| Processor Speed | 2.2 GHz |
| Number of Processors (chips) | 2 |
| Number of Cores/Chip | 8 |
| Memory Size | 48 GB |
| Operating System | Red Hat Enterprise Linux Server release 5.5 |
| Network Type | 10 Gigabit Ethernet |

Load Generator (LG) Configuration

Benchmark Parameters

| Field | Value |
|---|---|
| Network Attached Storage Type | NFS V3 |
| Number of Load Generators | 24 |
| Number of Processes per LG | 576 |
| Biod Max Read Setting | 8 |
| Biod Max Write Setting | 8 |
| Block Size | AUTO |

Testbed Configuration

| LG No | LG Type | Network | Target Filesystems | Notes |
|---|---|---|---|---|
| 1..24 | LG1 | 10 Gigabit Ethernet | /vx/fs01, /vx/fs02, /vx/fs03, ..., /vx/fs24 | N/A |

Load Generator Configuration Notes

All 24 filesystems were mounted over 10GE by every load generator.

Uniform Access Rule Compliance

Each client can access all 24 filesystems through the same 10GE network switch. All 24 filesystems are cluster-mounted on all 24 engine nodes, and each node exports all 24 filesystems, so there are 24 shares on each engine node. Each engine node has a dual-port 10GE NIC with both ports in use, giving 48 IP addresses in total (ip1...ip48), and each filesystem is accessed over all of these data network interfaces.

Each client mounts every filesystem through 24 IP addresses, chosen round-robin from the list of 48 so that each successive client uses the next 24 addresses in the series. For example, client 1 mounts ip1:/fs01, ip2:/fs01, ..., ip12:/fs01, ip1:/fs02, ip2:/fs02, ..., ip12:/fs02, ..., ip1:/fs24, ..., ip12:/fs24, followed by ip13:/fs01, ip14:/fs01, ..., ip24:/fs01, ip13:/fs02, ip14:/fs02, ..., ip24:/fs02, ..., ip13:/fs24, ip14:/fs24, ..., ip24:/fs24. That gives client 1 a total of 576 mount points (24 IP addresses × 24 filesystems), one for each of the 576 processes per load generator. Client 2 repeats the same pattern with ip25 to ip48, and client 3 starts again with ip1 to ip24. This ensures that data access to every filesystem is uniformly distributed across all clients and target IP addresses. The 24 filesystems were striped evenly across all the disks in the storage units.
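The mount-point assignment described above can be expressed as a short generator. This sketch assumes 1-based client numbers and the `ipN:/fsNN` naming used in the example. It reproduces the client 1 ordering (ip1 to ip12 across all filesystems, then ip13 to ip24) and the alternation between ip1 to ip24 and ip25 to ip48 for successive clients. `mount_points` is a hypothetical helper, not part of the SPECsfs tooling.

```python
from typing import List

NUM_IPS = 48            # 24 engine nodes x 2 10GE ports
IPS_PER_CLIENT = 24     # addresses each client uses for every filesystem
NUM_FILESYSTEMS = 24
BLOCK = 12              # the client 1 example lists its IPs in two blocks of 12

def mount_points(client: int) -> List[str]:
    """Ordered mount-point list for a 1-based client number (hypothetical helper)."""
    # Round-robin over the 48 addresses: client 1 -> ip1..ip24, client 2 -> ip25..ip48, ...
    start = ((client - 1) * IPS_PER_CLIENT) % NUM_IPS
    ips = [f"ip{start + i + 1}" for i in range(IPS_PER_CLIENT)]
    result = []
    # Walk each block of 12 addresses across all 24 filesystems.
    for b in range(0, IPS_PER_CLIENT, BLOCK):
        for fs in range(1, NUM_FILESYSTEMS + 1):
            for ip in ips[b:b + BLOCK]:
                result.append(f"{ip}:/fs{fs:02d}")
    return result

mps = mount_points(1)
print(len(mps), mps[0], mps[-1])  # 576 ip1:/fs01 ip24:/fs24
```

Across the 24 load generators, each of the 48 addresses ends up serving 288 mount points (12 clients × 24 filesystems), which is the uniform distribution the compliance note describes.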

Other Notes

There were 24 filesystems: fs01, fs02, ..., fs24. All of them were cluster-mounted on all 24 server nodes, and each server node in the cluster exported all 24 filesystems. SPECsfs clients connected to a 10GE network switch to reach all 24 server nodes in the cluster and mapped all 24 NFS shares. With this setup, each SPECsfs client could perform read/write I/O to all 24 filesystems uniformly.

Config Diagrams

Generated on Wed Oct 03 10:38:21 2012 by SPECsfs2008 HTML Formatter

Copyright © 1997-2008 Standard Performance Evaluation Corporation

First published at SPEC.org on 02-Oct-2012