SPEC SFS®2014_database Result

Copyright © 2016-2020 Standard Performance Evaluation Corporation


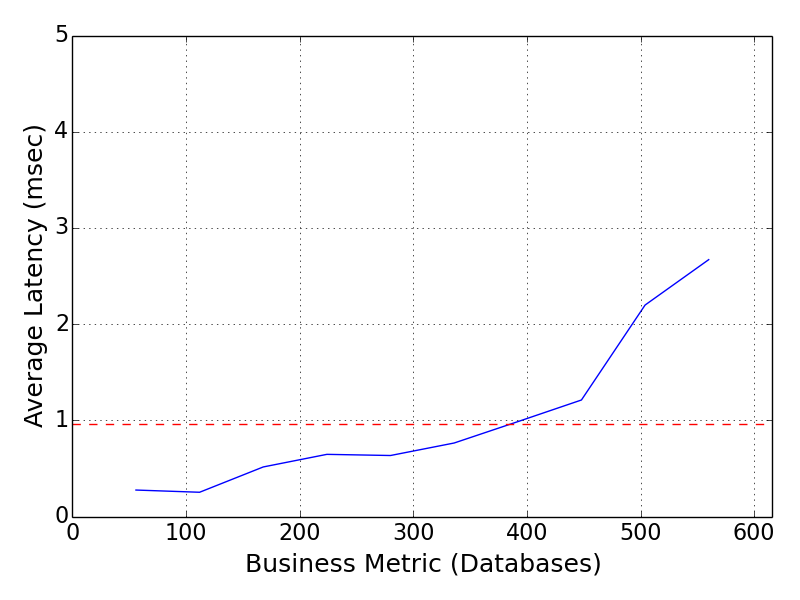

| Oracle | SPEC SFS2014_database = 560 Databases |
|---|---|
| Oracle ZFS Storage ZS7-2 Mid Range Four Tray Hybrid Storage System | Overall Response Time = 0.96 msec |

| Oracle ZFS Storage ZS7-2 Mid Range Four Tray Hybrid Storage System | |
|---|---|
| Tested by | Oracle |
| Hardware Available | November 13, 2018 |
| Software Available | December 13, 2019 |
| Date Tested | January 2020 |
| License Number | 00073 |
| Licensee Locations | Redwood Shores, CA, USA |

The Oracle ZFS Storage ZS7-2 Mid-Range system is a cost-effective, unified storage system that is ideal for performance-intensive, dynamic workloads. This enterprise-class storage system offers both NAS and SAN capabilities with industry-leading Oracle Database integration, in a highly available, clustered configuration.

The Oracle ZFS Storage ZS7-2 provides simplified configuration, management, and industry-leading storage Analytics. The performance-optimized platform uses specialized read and write flash caching devices in the hybrid storage pool configuration to optimize throughput and latency. The clustered Oracle ZFS Storage ZS7-2 Mid-Range system scales to 2.0TB of memory and 8 PB of disk storage, with 36 CPU cores per controller. The Oracle ZFS Storage Appliance delivers excellent value with integrated data services for file- and block-level protocols, with connectivity over InfiniBand, 32Gb FC, 40GbE, 25GbE, and 10GbE. Data can be compressed, deduplicated, encrypted, snapshotted, and replicated. Data is protected by an advanced data integrity architecture, and four RAID redundancy options are available to suit different workloads.

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 2 | Storage Controller | Oracle | Oracle ZFS Storage ZS7-2 Mid Range | Oracle ZFS Storage ZS7-2, 2 x 18-core 2.3GHz Intel Xeon Gold 6140 CPUs, 512 GB DDR4-2666 LRDIMM, 2 x 10TB SAS3 HGST boot drives. |
| 2 | 16 | Memory | Oracle | Oracle ZFS Storage ZS7-2 | Oracle ZFS Storage ZS7-2, 16 x 64GB DDR4-2666 LRDIMM. Memory is order configurable; a total of 512GB was installed in each storage controller. |
| 3 | 2 | Storage Drive Enclosure | Oracle | Oracle Storage Drive Enclosure DE3-24C | 24-drive-slot enclosure, SAS3 connected, 20 x 14TB Western Digital 7200 RPM - SAS-3 Disk Drive, 2 x Western Digital 200GB - SAS-3 SSD, 2 x Western Digital 7.68TB - SAS-3 SSD. Dual PSU. |
| 4 | 2 | Storage Drive Enclosure | Oracle | Oracle Storage Drive Enclosure DE3-24C | 24-drive-slot enclosure, SAS3 connected, 24 x 14TB Western Digital 7200 RPM - SAS-3 Disk Drive. Dual PSU. |
| 5 | 88 | SAS3 HDD | Oracle | WDC W7214A520ORA014T | 14TB Western Digital 7200 RPM - SAS-3 Disk Drive. Drive selection is order configurable. A total of 88 of these drives were installed across all four Oracle Storage Drive Enclosure DE3-24C units. |
| 6 | 4 | SAS3 SSD | Oracle | WDC HPCAC2DH6ORA200G | Western Digital 200GB - SAS-3 Solid State Disk. Drive selection is order configurable; a total of 4 of these drives were installed across two Oracle Storage Drive Enclosure DE3-24C units. These drives are used as write accelerators. |
| 7 | 4 | SAS3 SSD | Oracle | WDC HPCAC2DH2ORA7.6T | Western Digital 7.68TB - SAS-3 Solid State Disk. Drive selection is order configurable; a total of 4 of these drives were installed across two Oracle Storage Drive Enclosure DE3-24C units. These drives are used for the ZFS L2 Adaptive Replacement Cache (L2ARC). |
| 8 | 2 | Client | Oracle | Oracle S7-2 | Oracle S7-2 Client Node, 2 x SPARC S7 8-Core, 4.27GHz processors. 256GB RAM. 2 x (2 x 25GbE). Used for benchmark load generation. |
| 9 | 2 | OS Drive | Oracle | HGST H101812SFSUN1.2T | HGST 1.2TB - 10000 RPM - SAS-3 Disk Drive. One drive was installed in each Oracle S7-2 Client Node as the OS boot drive. |
| 10 | 1 | Switch | Arista | Arista DCS-7060CX-32S | Arista DCS-7060CX-32S, high-performance, low-latency 100/40/10/25/50 Gb/sec Ethernet switch. |
| 11 | 8 | Network Interface Card | Oracle | Dual 10/25-Gigabit SFP28 Ethernet | Dual 10/25-Gigabit SFP28 Ethernet HBA |
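The drive counts in items 3 through 7 can be cross-checked against each other. The sketch below transcribes the enclosure contents from items 3 and 4 and tallies them; the dictionary keys are illustrative labels of our own, not Oracle part names.

```python
# Sketch: tally the drive inventory from items 3 and 4 of the hardware table.
# The dict keys are illustrative labels, not Oracle part names.
from collections import Counter

SLOTS_PER_ENCLOSURE = 24  # DE3-24C is a 24-slot enclosure

# (number of enclosures of this layout, drives per enclosure)
enclosure_types = [
    (2, {"14TB HDD": 20, "200GB SSD (log)": 2, "7.68TB SSD (cache)": 2}),  # item 3
    (2, {"14TB HDD": 24}),                                                 # item 4
]

def tally(types):
    """Return total drive counts by type, checking each layout fits its slots."""
    totals = Counter()
    for count, contents in types:
        if sum(contents.values()) > SLOTS_PER_ENCLOSURE:
            raise ValueError(f"enclosure overfilled: {contents}")
        for kind, n in contents.items():
            totals[kind] += count * n
    return totals

totals = tally(enclosure_types)
for kind, n in sorted(totals.items()):
    print(f"{kind}: {n}")
```

The totals (88 HDDs, 4 log SSDs, 4 cache SSDs) match the quantities in items 5, 6, and 7, and every enclosure is fully populated.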

| Item No | Component | Type | Name and Version | Description |
|---|---|---|---|---|
| 1 | Oracle ZFS Storage | Storage Controller OS | 8.8.9 | Oracle ZFS Storage OS Firmware. |
| 2 | Solaris | Workload Client OS | Solaris (11.4,5.11-11.4.16.0.1.2.0) | Workload Client Operating System |

| Component | Parameter Name | Value | Description |
|---|---|---|---|
| Oracle ZFS Storage ZS7-2 | MTU | 9000 | Network Jumbo Frames |
| Oracle S7-2 Client Node | MTU | 9000 | Network Jumbo Frames |

The 25GbE Ethernet ports in the Solution Under Test are set to MTU 9000. The S7-2 workload clients have power management software controls for SPARC processor power states. This control is set to administrative-authority "none" via the Solaris poweradm CLI, which prevents CPU power throttling.
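As a rough illustration, the client-side settings described above correspond to Solaris `dladm` and `poweradm` commands. The sketch below only builds and prints the command lines; the datalink names `net0` to `net3` are hypothetical, and a real client may need additional steps (for example, adjusting the IP interface on a link before changing its MTU), so consult the dladm(1M) and poweradm(1M) man pages.

```python
# Sketch: build (but do not run) the Solaris commands matching the client
# settings described above. Datalink names net0..net3 are hypothetical.

JUMBO_MTU = 9000

def client_tuning_commands(links):
    """Return command lines for jumbo frames and for the power-management setting."""
    cmds = [f"dladm set-linkprop -p mtu={JUMBO_MTU} {link}" for link in links]
    # Per the report, administrative-authority=none prevents CPU power throttling.
    cmds.append("poweradm set administrative-authority=none")
    return cmds

for line in client_tuning_commands(["net0", "net1", "net2", "net3"]):
    print(line)
```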

| Component | Parameter Name | Value | Description |
|---|---|---|---|
| Oracle S7-2 Client Nodes | vers | 3 | NFS mount option set to version 3 |
| Oracle S7-2 Client Nodes | rsize, wsize | 1048576 | NFS mount options for the read and write buffer sizes, in bytes. |
| Oracle S7-2 Client Nodes | forcedirectio | forcedirectio | NFS mount option set to forcedirectio |
| Oracle S7-2 Client Nodes | rpcmod:clnt_max_conns | 4 | Increases the number of NFS client connections from the default of 1 to 4. |

Best-practice settings for the network, the NFS clients, and the Oracle ZFS Storage over 25GbE Ethernet include mounting the workload clients' NFS shares with forcedirectio and setting the NFS read and write buffer sizes to 1048576 bytes each.
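The client parameters in the table above can be assembled into a Solaris `mount -F nfs` option string plus an `/etc/system` tunable line. This is a minimal sketch: the server name, share path, and mount point in the example call are hypothetical, and `/etc/system` changes only take effect after a reboot.

```python
# Sketch: turn the NFS client parameters from the table into a Solaris mount
# command and an /etc/system line. Server/share/mountpoint names are hypothetical.

NFS_OPTIONS = {"vers": "3", "rsize": "1048576", "wsize": "1048576"}
FLAGS = ["forcedirectio"]  # boolean option: present or absent

def mount_options(options=NFS_OPTIONS, flags=FLAGS):
    """Return the comma-separated argument for `mount -F nfs -o`."""
    parts = [f"{k}={v}" for k, v in options.items()] + list(flags)
    return ",".join(parts)

def mount_command(server, share, mountpoint):
    return f"mount -F nfs -o {mount_options()} {server}:{share} {mountpoint}"

# rpcmod:clnt_max_conns is a kernel tunable set in /etc/system, not a mount option.
ETC_SYSTEM_LINE = "set rpcmod:clnt_max_conns = 4"

print(mount_command("zfssa-ctl1-net1", "/export/db1", "/mnt/db1_net1"))
print(ETC_SYSTEM_LINE)
```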

None

| Item No | Description | Data Protection | Stable Storage | Qty |
|---|---|---|---|---|
| 1 | 88 x 14TB HDD Oracle ZFS Storage ZS7-2 Data Pool Drives | RAID-10 | Yes | 88 |
| 2 | 4 x 200GB SSD Oracle ZFS Storage ZS7-2 Log Drives | None | Yes | 4 |
| 3 | 4 x 7.68TB SSD Oracle ZFS Storage ZS7-2 Read Cache Drives (Western Digital 7.68TB - SAS-3 Solid State Disk) | None | Yes | 4 |
| 4 | 10TB HGST Oracle ZFS Storage ZS7-2 OS Drives | Mirrored | No | 4 |
| 5 | HGST 1.2TB - 10000 RPM - SAS-3 Disk Drive, Oracle S7-2 Client Node OS Drives | None | No | 2 |

| Number of Filesystems | Total Capacity | Filesystem Type |
|---|---|---|
| 2 | 467 TiB | ZFS |

Two ZFS storage pools are created in the Solution Under Test (one storage pool per Oracle ZFS Storage ZS7-2 controller). Each storage pool is configured with 42 HDDs, 2 write accelerator SSDs (log devices), 2 L2 Adaptive Replacement Cache SSDs (read cache), and 2 hot spare HDDs. The storage pools are configured via the administrative browser interface. Half of the disk drives, log devices, and cache devices are assigned to each storage controller. Next, the storage pool profile is set to mirror (RAID-10) across the 42 data HDDs. The log and cache device profiles are set to striped. The log construct in each storage pool is the ZFS Intent Log (ZIL) for the pool, and the cache construct is the ZFS L2 Adaptive Replacement Cache (L2ARC) for the pool. Each storage pool is configured with one ZFS filesystem share. Since each controller has one storage pool and each storage pool contains one ZFS filesystem share, the Solution Under Test has two ZFS filesystem shares (2 NFS shares).
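The pool layout above can be turned into a rough capacity estimate. The arithmetic below assumes the nominal decimal 14 TB per drive and counts only mirrored data drives; it ignores ZFS metadata and reservations, so it is an upper bound rather than a prediction of usable space.

```python
# Worked arithmetic for the pool layout described above. Assumes nominal
# decimal 14 TB drives; ZFS metadata and reservations are not modeled.

TB = 10**12
TiB = 2**40

HDDS_TOTAL = 88
POOLS = 2
SPARES_PER_POOL = 2
DRIVE_BYTES = 14 * TB

hdds_per_pool = HDDS_TOTAL // POOLS                   # 44 HDDs per pool
data_hdds_per_pool = hdds_per_pool - SPARES_PER_POOL  # 42 data HDDs
mirror_pairs_per_pool = data_hdds_per_pool // 2       # 21 two-way mirrors

# Mirroring halves raw capacity: each pair contributes one drive's worth.
usable_per_pool_tib = mirror_pairs_per_pool * DRIVE_BYTES / TiB
usable_total_tib = POOLS * usable_per_pool_tib

print(f"{mirror_pairs_per_pool} mirror pairs per pool")
print(f"~{usable_per_pool_tib:.1f} TiB per pool, ~{usable_total_tib:.1f} TiB total")
```

This gives about 534.8 TiB across both pools. The reported Total Capacity of 467 TiB is lower; the report does not itemize the difference, which presumably reflects filesystem overhead.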

Each Oracle ZFS Storage ZS7-2 controller has 2 internal mirrored system disk drives, which are used only for the controller's NAS operating system. These drives are used exclusively for the NAS firmware and do not cache or store user data.

The Oracle S7-2 workload clients mount the two NFS shares across four 25GbE networks, such that each client accesses the two NFS shares via four network paths over eight NFS mounts (see Solution Under Test diagram).
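The mount topology above can be enumerated mechanically: every client mounts each of the two shares once per network. In this sketch the controller host names, share paths, and mount points are hypothetical placeholders; only the counts come from the report.

```python
# Sketch: enumerate the NFS mounts implied by the topology described above.
# Host names, share paths and mount points are hypothetical placeholders.
from itertools import product

CLIENTS = ["client1", "client2"]
# One share per controller; each share is reachable over four networks.
SHARES = {"ctl1": "/export/share1", "ctl2": "/export/share2"}
NETWORKS = [1, 2, 3, 4]

def mount_plan():
    """Return (client, server:share, mountpoint) tuples, one per NFS mount."""
    plan = []
    for client, (ctl, share), net in product(CLIENTS, SHARES.items(), NETWORKS):
        server = f"{ctl}-net{net}"  # hypothetical per-network interface name
        mountpoint = f"/mnt/{share.rsplit('/', 1)[-1]}_net{net}"
        plan.append((client, f"{server}:{share}", mountpoint))
    return plan

plan = mount_plan()
per_client = {c: sum(1 for p in plan if p[0] == c) for c in CLIENTS}
print(per_client)
```

Each client ends up with eight mounts (two shares times four network paths), sixteen in total.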

All filesystems on both Oracle ZFS Storage ZS7-2 controllers are created with a Database Record Size of 32KB. The logbias setting is set to latency (the default value) for each filesystem. These standard settings are managed through the Oracle ZFS Storage administration browser interface or CLI.
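ZFS stores file data in records of up to the configured record size, and because it is copy-on-write, a write that covers only part of a record leads to the whole record being rewritten. The sketch below, which does not model ZFS itself, shows which 32 KiB records a given I/O touches; the offsets and lengths are arbitrary examples. That the Database Record Size setting maps to this record size is our reading, not a statement in the report.

```python
# Sketch: which 32 KiB records does an I/O touch? Not a model of ZFS internals;
# the example offsets and lengths are arbitrary.

RECORD_SIZE = 32 * 1024

def records_touched(offset, length, recordsize=RECORD_SIZE):
    """Return the range of record indexes covered by [offset, offset + length)."""
    if length <= 0:
        raise ValueError("length must be positive")
    first = offset // recordsize
    last = (offset + length - 1) // recordsize
    return range(first, last + 1)

def is_full_record_write(offset, length, recordsize=RECORD_SIZE):
    """True if the I/O covers whole records exactly (no partial-record update)."""
    return offset % recordsize == 0 and length % recordsize == 0

print(list(records_touched(0, 32 * 1024)))          # [0]: one aligned record
print(list(records_touched(16 * 1024, 32 * 1024)))  # [0, 1]: straddles two records
print(is_full_record_write(8 * 1024, 8 * 1024))     # False: partial record
```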

| Item No | Transport Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | 25GbE Ethernet | 8 | Each Oracle ZFS Storage ZS7-2 Controller is networked via 4 x 25GbE Ethernet physical ports for data. |
| 2 | 10GbE Ethernet | 2 | Each Oracle ZFS Storage ZS7-2 Controller uses 1 x 10GbE Ethernet physical port for NAS configuration and management. |
| 3 | 25GbE Ethernet | 8 | Each Oracle S7-2 Client Node is networked via 4 x 25GbE Ethernet physical ports for data. |
| 4 | 10GbE Ethernet | 2 | Each Oracle S7-2 Client Node uses 1 x 10GbE Ethernet physical port for configuration and management. |

Each Oracle ZFS Storage controller uses 4 x 25GbE Ethernet ports, for a total of 8 x 25GbE ports. In the event of a controller failure, the failed controller's IP addresses are taken over by the surviving controller. All 25GbE ports are set to MTU 9000. Each controller has 1 x 10GbE port assigned to the administration interface; this interface is used only to manage the controller and does not take part in data services in the Solution Under Test.

| Item No | Switch Name | Switch Type | Total Port Count | Used Port Count | Notes |
|---|---|---|---|---|---|
| 1 | Arista DCS-7060CX-32S | 100/40/10/25/50 Gb/sec Ethernet Switch | 128 | 16 | All ports set for MTU 9000. Port count based on 25GbE. |
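The port counts in the network and switch tables can be reconciled with a little arithmetic. The split of the 128-port total into 32 x 100GbE ports broken out 4 ways into 25GbE is our assumption; the report states only that the count is "based on 25GbE". The bandwidth figures are nominal line rates, not measured throughput.

```python
# Worked arithmetic for the data network in the tables above.
# Assumption (ours, not the report's): the 128-port total comes from
# 32 x 100GbE QSFP ports, each split into 4 x 25GbE.

PORT_GBPS = 25
CONTROLLERS, PORTS_PER_CONTROLLER = 2, 4
CLIENTS, PORTS_PER_CLIENT = 2, 4
QSFP_PORTS, BREAKOUT = 32, 4  # assumed switch geometry

storage_ports = CONTROLLERS * PORTS_PER_CONTROLLER  # 8 x 25GbE on the storage side
client_ports = CLIENTS * PORTS_PER_CLIENT           # 8 x 25GbE on the client side
used_switch_ports = storage_ports + client_ports    # matches "Used Port Count"
total_switch_ports = QSFP_PORTS * BREAKOUT          # matches "Total Port Count"

# Aggregate nominal line rate per side; not a measured throughput.
storage_gbps = storage_ports * PORT_GBPS
client_gbps = client_ports * PORT_GBPS

print(f"switch ports used: {used_switch_ports}/{total_switch_ports}")
print(f"nominal line rate: storage {storage_gbps} Gb/s, clients {client_gbps} Gb/s")
```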

| Item No | Qty | Type | Location | Description | Processing Function |
|---|---|---|---|---|---|
| 1 | 4 | CPU | Oracle ZFS Storage ZS7-2 | 2 x 18-core 2.3GHz Intel Xeon Gold 6140 CPUs per controller | ZFS, TCP/IP, RAID/Storage Drivers, NFS |
| 2 | 4 | CPU | Oracle S7-2 Client Node | 2 x SPARC S7 8-core, 4.27GHz processors per client | TCP/IP, NFS |

Each Oracle ZFS Storage ZS7-2 controller contains 2 physical processors, each with 18 processing cores.

Each Oracle S7-2 client contains 2 physical processors, each with 8 processing cores.

| Description | Size in GiB | Number of Instances | Nonvolatile | Total GiB |
|---|---|---|---|---|
| Memory in Oracle ZFS Storage ZS7-2 | 477 | 2 | V | 954 |
| Memory in Oracle S7-2 clients | 238 | 2 | V | 476 |
| Grand Total Memory Gibibytes | | | | 1430 |
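The "Size in GiB" column is consistent with the installed sizes from the hardware table (512GB per controller, 256GB per client) read as decimal gigabytes and converted to GiB. That reading is inferred from the numbers, not stated in the report; the check below reproduces the table.

```python
# Worked check of the memory table. Treating installed sizes as decimal GB is
# an inference from the numbers, not a statement in the report.

GB = 10**9
GiB = 2**30

installed_gb = {"ZS7-2 controller": 512, "S7-2 client": 256}
instances = {"ZS7-2 controller": 2, "S7-2 client": 2}

# Convert each installed size to GiB and round to the nearest whole GiB.
size_gib = {k: round(v * GB / GiB) for k, v in installed_gb.items()}
total_gib = sum(size_gib[k] * instances[k] for k in size_gib)

print(size_gib, total_gib)
```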

The Oracle ZFS Storage controllers' main memory is used for the ZFS Adaptive Replacement Cache (ARC, a read data cache) as well as for operating system memory.

Oracle S7-2 client memory is used only for the client OS; it is not used for storage or caching on behalf of the Oracle ZFS Storage ZS7-2 controllers.

The Stable Storage requirement is guaranteed by the ZFS Intent Log (ZIL), which logs writes and other filesystem-changing transactions to stable storage: write flash accelerator SSDs or HDDs, depending on the configuration. The Solution Under Test uses write flash accelerator SSDs. Writes and other filesystem-changing transactions are not acknowledged until the data is written to stable storage. The Oracle ZFS Storage Appliance is an active-active high-availability cluster: in the event of a controller failure or power loss, each controller can take over for the other. Because the write flash accelerator SSDs and/or HDDs are located in shared disk shelves and can be accessed from both controllers via the 4 backend SAS-3 channels, the surviving controller can complete any outstanding transactions using the ZIL. In the event of power loss to both controllers, the ZIL is used after power is restored to reinstate any writes and other filesystem changes.
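The acknowledge-after-logging behavior described above can be illustrated with a toy model: a write is appended to a stable log before it is acknowledged, pending changes are committed to the main pool later, and after a failure the log is replayed. This is a simplified sketch, not ZFS's implementation; the class and method names are hypothetical.

```python
# Toy model of intent-log semantics (not ZFS's implementation; names are
# hypothetical). Writes are logged to stable storage before acknowledgment.
from dataclasses import dataclass, field


@dataclass
class IntentLogStore:
    pool: dict = field(default_factory=dict)     # committed data in the main pool
    pending: dict = field(default_factory=dict)  # volatile, not yet committed
    log: list = field(default_factory=list)      # stable log records

    def write(self, key, value):
        """Append the change to the stable log first, then acknowledge."""
        self.log.append((key, value))
        self.pending[key] = value
        return "ack"

    def commit(self):
        """Flush pending changes to the pool; their log records are then discarded."""
        self.pool.update(self.pending)
        self.pending.clear()
        self.log.clear()

    def crash(self):
        """Lose volatile state; the pool and the stable log survive."""
        self.pending.clear()

    def replay(self):
        """Reapply logged records in order, then commit them."""
        for key, value in self.log:
            self.pending[key] = value
        self.commit()


store = IntentLogStore()
store.write("a", 1)
store.commit()
store.write("b", 2)  # acknowledged, but only in the log and in memory
store.crash()        # e.g. controller failure before the commit
store.replay()       # surviving controller (or restart) replays the log
print(store.pool)    # {'a': 1, 'b': 2}
```

The acknowledged write `b` survives the crash only because it was logged before being acknowledged, which is the property the Stable Storage requirement relies on.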

The ZS7-2 component is the Oracle ZFS Storage ZS7-2 Mid Range in an active-active failover configuration.

A non-default WARMUP_TIME=900 (seconds) was used for the DATABASE workload.

Please reference the Solution Under Test diagram. The 2 Oracle S7-2 workload clients are used for benchmark load generation. Each Oracle S7-2 workload client mounts both filesystems provided by the Oracle ZFS Storage cluster via NFSv3. One filesystem is shared from each Oracle ZFS Storage controller. Each of the two Oracle ZFS Storage controllers has 4 x 25GbE Ethernet ports for data service, and each port is assigned to a separate subnet. Each Oracle S7-2 workload client has 4 x 25GbE Ethernet ports and mounts each NFS share four times over four networks.

Oracle and ZFS are registered trademarks of Oracle Corporation in the U.S. and/or other countries. Intel and Xeon are registered trademarks of Intel Corporation in the U.S. and/or other countries.

The test sponsor attests, as of the date of publication, that CVE-2017-5754 (Meltdown), CVE-2017-5753 (Spectre variant 1), and CVE-2017-5715 (Spectre variant 2) patches are disabled in the system as tested and documented. The system supports turning this protection on or off; it is disabled (off) for this Solution Under Test, which is also the product default. These mitigations may be enabled through the standard administrative interface. There may be performance impacts when these patches are enabled.

Generated on Fri Mar 6 18:31:16 2020 by SpecReport
