Why would a cloud computing company use the SPEC CPU2017 benchmark suite?

by Bob Cramblitt

July 2017

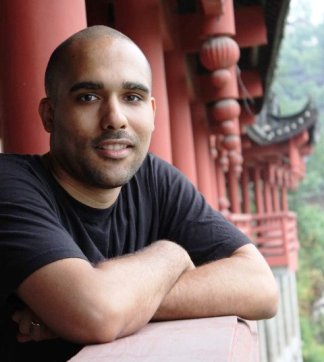

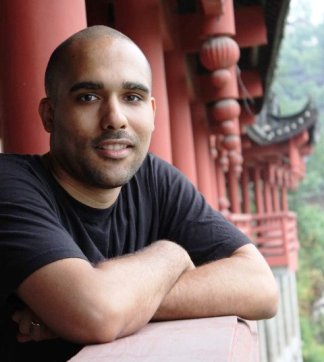

Michael Hines, a senior engineer at DigitalOcean, is not your typical user of a SPEC CPU benchmark suite. He's not a high-end server user, a hardware system vendor, a researcher or an independent software vendor (ISV). Yet he finds the new SPEC CPU2017 benchmark suite critically important for his work at DigitalOcean.

DigitalOcean describes itself as "a company that makes it simple for developers to build great software in the cloud." The company reports having approximately one million registered users with more than 50,000 active teams since it was founded in 2011.

Hines is a two-time winner of the SPECtacular award, given to SPEC members who go above and beyond the call of duty in their volunteer work for the benchmarking consortium. He won the awards for his work in helping to find, fix and test issues in the SPEC Cloud_IaaS 2016 benchmark.

Hines' primary job with DigitalOcean is similar to what he does for SPEC: He works on the performance and reliability of hypervisors (the systems that create and monitor virtual machines) in DigitalOcean's cloud. This includes benchmarking, analysis and improvement of the kernel, virtual switching, virtual machines, and how certain applications run on the company's systems. That's where SPEC CPU2017 comes in.

Directly influencing purchasing

"We use SPEC CPU2017 to directly influence purchasing decisions we make for our hypervisors," says Hines. "The CPU-specific virtualization extensions in most modern hypervisors have largely eliminated the CPU-bound overheads associated with virtualization. Thus, we felt quite comfortable comparing one virtual machine to another in isolation. While we're not a server vendor, the all-in-one nature of SPEC CPU2017 made this comparison of one vendor to another very easy and very simple."

Hines believes the competitive nature of the SPEC membership provides checks and balances that lead to a more equitable benchmark suite.

"By developing benchmarks in a committee format and allowing the incorporation of open-source software when necessary, you get a near-absolute guarantee of fairness in the operation and scoring of the benchmark. Time and time again, we've seen third parties create benchmarks either too quickly or without sufficient community input. SPEC's rigorous format takes the fear out of benchmark development and use."

Wide coverage of application areas

Although SPEC CPU2017 is not explicitly designed for evaluating hypervisors, Hines thinks it hits upon most of the key performance aspects that are valuable to him and DigitalOcean.

"When choosing a price-point on a server, the processor and its interaction with the rest of the design of the server is a major business decision. The benchmark helps us make that decision. As a cloud platform provider, we have no definite way of knowing what types of applications our users are going to run on the servers that we buy, but SPEC CPU2017 spans many different application areas of computing. That allows us to trust that we have covered as many of the special cases in which a customer might utilize our hardware as possible."

The ability of SPEC CPU2017 to enable engagement at different levels is also valuable to Hines.

"If you're communicating with a higher-level team or a vendor, you can examine the simplified average scores and raise concerns before making a purchase or bringing a product online. If you're debugging raw performance or a customer issue and you need to understand the particular behavior of some part of the CPU instruction set or interactions with main memory, you can dive into the detailed results and optionally run those programs in isolation from a particular application area."

"Nothing comes close"

There are myriad CPU benchmarks out there, most of which are synthetic and some of which are cheap or free, but to Hines there is nothing that compares to SPEC CPU2017.

"Quite honestly, nothing comes close. Even if a synthetic benchmark did come close to covering a similar number of application areas, its scoring and set of run rules would still likely fall by the wayside. SPEC CPU2017 encompasses all of the modern conveniences of the SPEC run rules, including documenting CPU compiler flags, minimum numbers of iterations, quality of service of the applications themselves, reporting, portability and so forth. If a benchmark does not start with these bare minimums, it should not be trusted. Like most of SPEC's benchmarks, SPEC CPU2017 does all of those things and then some."

###

The SPEC CPU2017 benchmark suite is now available for download on this website. The all-new version features updated and improved workloads, use of OpenMP to accommodate more cores and threads, and an optional metric for measuring power consumption.

Bob Cramblitt is a freelance writer and SPEC's communications director. The opinions stated in this article do not necessarily reflect those of the SPEC organization.
